Interpreters, Compilation, and Concurrency Tooling in PLAS at Kent

Here at Kent, we have a large group of researchers working on Programming Languages and Systems (PLAS), and within this group, we have a small team focusing on research on interpreters, compilation, and tooling to make programming easier.

It’s summer 2021, and I felt it’s time for a small inventory of the things we are up to. At this very moment, the team consists of Sophie, Octave, and myself. I’ll include Dominik and Carmen as well, for bragging rights, though they are either finishing their PhD or have just recently defended it.

If you find this work interesting, perhaps you’d like to join us for a postdoc?

Utilizing Program Phases for Better Performance

Sophie recently presented her early work at the ICOOOLPS workshop. Modern language virtual machines do a lot of work behind the scenes to understand what our programs are doing.

However, techniques such as inlining and lookup caches have limitations. While one could see inlining as a way to give extra top-down context to a compilation, it’s inherently limited because of the overhead of run-time compilation and excessive code generation.

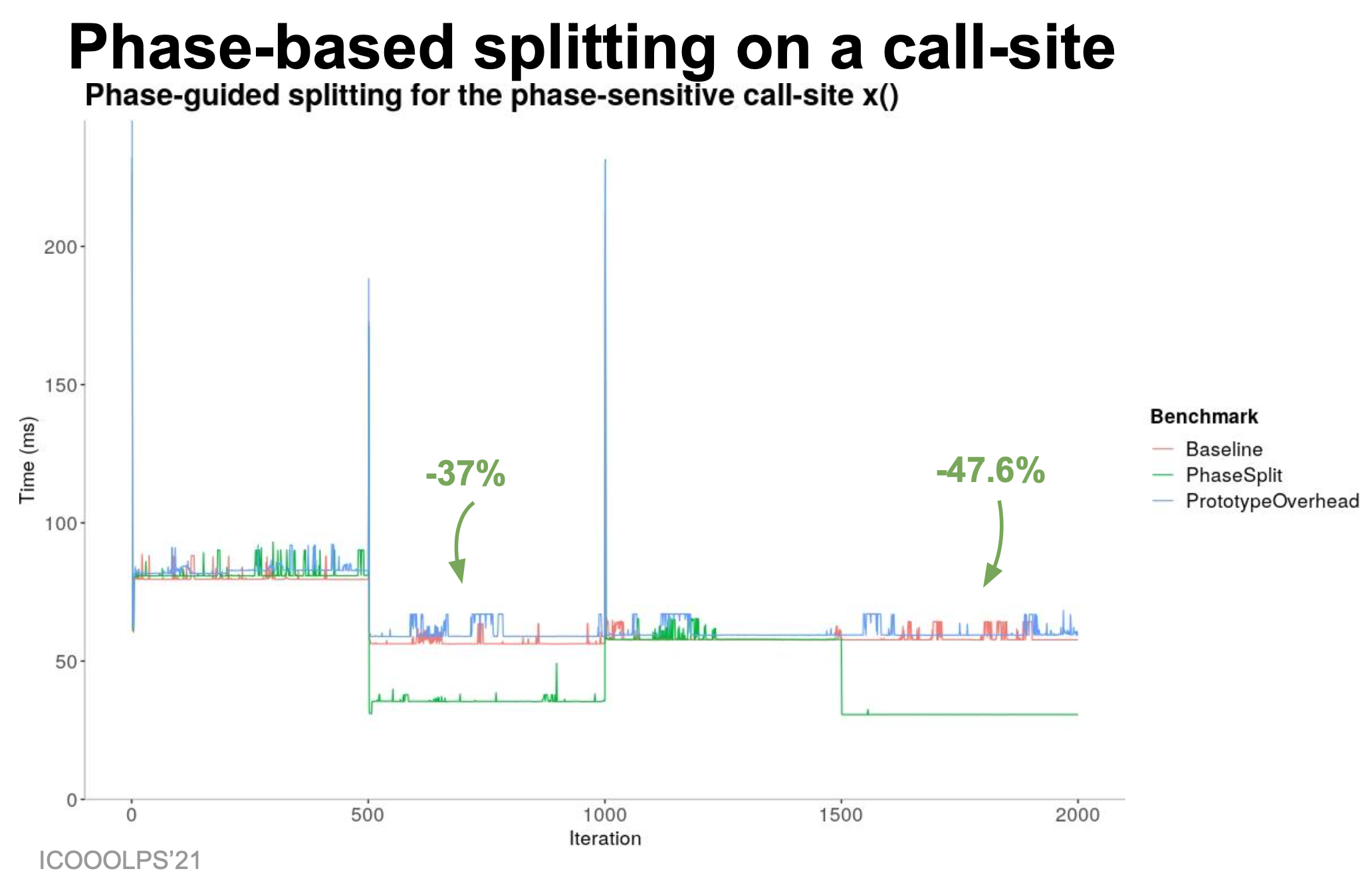

To bring top-down context more explicitly into these systems, Sophie explores the notion of execution phases. Programs often do different things one after another, perhaps first loading data, then processing it, and finally generating output. Our goal is to utilize these phases to help compilers produce better code for each of them. To give just one example, here is a bit from one of Sophie’s ICOOOLPS slides:

The green line there is the microbenchmark with phase-based splitting enabled. It gives a nice speedup for the second and fourth phases, which benefit from monomorphization and from compiling only what’s important for the phase rather than for the whole program.

Sophie has already shown that there is potential, and the discussions at ICOOOLPS led to a number of new ideas for experiments, but it’s still early days.
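To make the monomorphization point a bit more concrete, here is a minimal sketch of a toy call site that tracks the receiver types it sees. It is not Sophie’s implementation, and the names `CallSite`, `Loader`, and `Transformer` are made up for illustration. The only assumption is that a call site with a single observed type is cheaper to optimize than one with several.

```python
from collections import defaultdict


class CallSite:
    """A toy call site that records which receiver types it has seen."""

    def __init__(self, phase_aware):
        self.phase_aware = phase_aware
        # Maps a cache key (a phase name, or None for one shared cache)
        # to the set of receiver types observed under that key.
        self.seen_types = defaultdict(set)

    def call(self, receiver, phase):
        key = phase if self.phase_aware else None
        self.seen_types[key].add(type(receiver))
        return receiver.process()

    def max_cache_entries(self):
        # The largest number of types any single cache has to handle.
        return max(len(types) for types in self.seen_types.values())


class Loader:  # hypothetical receiver used in the loading phase
    def process(self):
        return "load"


class Transformer:  # hypothetical receiver used in the processing phase
    def process(self):
        return "transform"


def run(site):
    for _ in range(3):
        site.call(Loader(), phase="loading")
    for _ in range(3):
        site.call(Transformer(), phase="processing")
    return site.max_cache_entries()


shared = run(CallSite(phase_aware=False))
split = run(CallSite(phase_aware=True))
print(shared, split)  # prints: 2 1
```

With one shared cache the call site is polymorphic over the whole run, while splitting by phase leaves each phase with a single receiver type.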

Generating Benchmarks to Avoid the Tiny-Benchmark Trap

In some earlier blog posts and a talk at MoreVMs’21 (recording), I argued that we need better benchmarks for our language implementations. It’s a well-known issue that the benchmarks that academia uses for research are rarely a good representation of what real systems do, often simply because they are tiny. Thus, I want to be able to monitor a system in production and then, from the behavior that we saw, generate benchmarks that can be freely shared with other researchers. Octave is currently working on such a system to generate benchmarks from abstract structural and behavioral data about an application.

It’s a long way to go. Octave currently instruments Java applications, records what they do at run time, and generates benchmarks with similar behavior from that. Given that Java isn’t the smallest language, there’s a lot to be done, but I hope we’ll have a first idea of whether this could work by the end of the summer 🤞🏻
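The record-then-generate idea can be sketched in a few lines. This is only a toy under strong assumptions: it is in Python rather than Java, it records nothing but call counts per function, and `instrument` and `generate_benchmark` are hypothetical helpers, not part of Octave’s system.

```python
from collections import Counter


def instrument(counts):
    """Decorator factory that counts calls to the wrapped function."""
    def decorator(fn):
        def wrapper(*args, **kwargs):
            counts[fn.__name__] += 1
            return fn(*args, **kwargs)
        return wrapper
    return decorator


def generate_benchmark(counts):
    """Emit source for a synthetic program with the same call counts."""
    lines = ["calls = {}"]
    for name in sorted(counts):
        lines.append(f"def {name}():")
        lines.append(f"    calls['{name}'] = calls.get('{name}', 0) + 1")
    lines.append("def run():")
    lines.append("    calls.clear()")
    for name in sorted(counts):
        lines.append(f"    for _ in range({counts[name]}):")
        lines.append(f"        {name}()")
    return "\n".join(lines) + "\n"


# The "application" we observe; its real logic and data never leave this run.
counts = Counter()


@instrument(counts)
def parse(line):
    return line.split(",")


@instrument(counts)
def total(fields):
    return sum(int(field) for field in fields)


for line in ["1,2", "3,4", "5,6"]:
    total(parse(line))

# Generate the benchmark from the recorded profile and run it.
source = generate_benchmark(counts)
namespace = {}
exec(source, namespace)
namespace["run"]()
```

The generated program contains only stubs with the recorded names and counts, which is what makes it shareable. A realistic generator would need to capture far more, such as call order, types, and allocation behavior.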

Reproducing Nondeterminism in Multiparadigm Concurrent Programs

Dominik is currently writing up his dissertation on reproducing nondeterminism. His work revolves around tracing and record & replay of concurrent systems. We wrote a number of papers [1, 2, 3], which developed efficient ways of doing this, first just for actor programs and then for various other high-level concurrency models. The end result allows us to record & replay programs that combine various concurrency models, with very low overhead. This is the kind of technology that is needed to reliably debug concurrency issues, and perhaps in the future even allow for automatic mitigation!
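The core idea of record & replay can be illustrated with a tiny sketch: log every nondeterministic scheduling decision during one run, then feed the log back in to force the same interleaving. This is a simplified model and not the approach from the papers. The function names and the message format are assumptions made up for this example, and a seeded random number generator stands in for a real scheduler.

```python
import random


def run_actors(messages, choose):
    """Deliver pending messages one at a time; `choose` picks the next one."""
    pending = list(messages)
    state = 0
    order = []
    while pending:
        index = choose(len(pending))
        op, value = pending.pop(index)
        order.append(index)  # the trace: one entry per scheduling decision
        state = state + value if op == "add" else state * value
    return state, order


def record(messages, seed):
    """Run with a random scheduler and return the result plus its trace."""
    rng = random.Random(seed)
    return run_actors(messages, lambda n: rng.randrange(n))


def replay(messages, trace):
    """Run again, taking every scheduling decision from the recorded trace."""
    choices = iter(trace)
    return run_actors(messages, lambda n: next(choices))


# The final state depends on the delivery order, so runs can differ.
messages = [("add", 2), ("mul", 3), ("add", 5), ("mul", 7)]
recorded_state, trace = record(messages, seed=42)
replayed_state, replayed_trace = replay(messages, trace)
print(recorded_state == replayed_state)  # prints: True
```

Because additions and multiplications do not commute, different delivery orders can produce different results, and the trace is what pins down one particular execution.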

Advanced Debugging Techniques to Handle Concurrency Bugs in Actor-based Applications

Last month, Carmen successfully defended her PhD on debugging techniques for actor-based applications. This work focused on the user side of debugging. First, we built a debugger for all kinds of concurrency models and looked at what kinds of bugs we should worry about. Finally, she did a user study on a new debugger she built to address these issues. We also worked on enabling the exploration of all possible executions and concurrency bugs of a program. The Voyager Multiverse Debugger has a nice demo created by our collaborators, which shows that we can navigate all possible execution paths of non-deterministic programs.

Preventing Concurrency Issues, Automatically, At Run Time

While all these projects are very dear to my heart, there’s one I’d really love to make more progress on as well: automatically preventing concurrency issues from causing harm.

We are looking for someone to join our team!

If you are interested in programming language implementation and concurrency, please reach out! We have a two-year postdoc position here at Kent in the PLAS group, and you would join Sophie, Octave, and me to work on interesting research. In the project, we’ll also continue to collaborate with Prof. Gonzalez Boix and her DisCo research group in Brussels (Belgium), Prof. Mössenböck in Linz (Austria), and the GraalVM team of Oracle Labs, which includes the opportunity for research visits.

Our team is also well connected, for instance with Shopify, which supports a project on improving warmup and interpreter performance of GraalVM languages.

I head the